To kill a centrifuge

The following is an excerpt from “To kill a centrifuge” for the busy reader, without graphics or background material. If you like the excerpt, check out the full text if you can, because the images from Natanz are important and interesting.

Prologue: A Textbook Example of Cyber Warfare

Overpressure Attack: Silent Hijack of the Crown Jewels

Rotor Speed Attack: Pushing the Envelope

Analysis: The Dynamics of a Cyber Warfare Campaign

Acknowledgements

Andreas Timm, Olli Heinonen, Richard Danzig, and R. Scott Kemp provided valuable feedback in the process of writing this paper. Nevertheless any views expressed are the author’s, not theirs.

Executive Summary

This document summarizes the most comprehensive research on the Stuxnet malware so far: It combines results from reverse engineering the attack code with intelligence on the design of the attacked plant and background information on the attacked uranium enrichment process. It looks at the attack vectors of the two different payloads contained in the malware and especially provides an analysis of the bigger and much more complex payload that was designed to damage centrifuge rotors by overpressure. With both attack vectors viewed in context, conclusions are drawn about the reasoning behind a radical change of tactics between the complex earlier attack and the comparatively simple later attack that tried to manipulate centrifuge rotor speeds. It is reasoned that between 2008 and 2009 the creators of Stuxnet realized that they were on to something much bigger than delaying the Iranian nuclear program: History’s first field experiment in cyber-physical weapon technology. This may explain why, in the course of the campaign against Natanz, OPSEC was loosened to the extent that one can speculate that the attackers were ultimately no longer concerned about being detected but rather about pushing the envelope.

Another section of this paper is dedicated to the discussion of several popular misconceptions about Stuxnet, most importantly how difficult it would be to use Stuxnet as a blueprint for cyber-physical attacks against critical infrastructure of the United States and its allies. It is pointed out that offensive cyber forces around the world will certainly learn from history’s first true cyber weapon, and it is further explained why nation state resources are not required to launch cyber-physical attacks. It is also explained why conventional infosec wisdom and deterrence do not sufficiently protect against Stuxnet-inspired copycat attacks.

The last section of the paper provides a wealth of plant floor footage that allows for a better understanding of the attack, and it also closes a gap in the research literature on the Iranian nuclear program that so far focused on individual centrifuges rather than on higher-level assemblies such as cascades and cascade units. In addition, intelligence is provided on the instrumentation and control that is a crucial point in understanding Iran’s approach to uranium enrichment.

There is only one reason why we publish this analysis: To help asset owners and governments protect against sophisticated cyber-physical attacks as they will almost definitely occur in the wake of Stuxnet. Public discussion of the subject and corporate strategies on how to deal with it clearly indicate widespread misunderstanding of the attack and its details, not to mention a misunderstanding of how to secure industrial control systems in general. For example, post-Stuxnet mitigation strategies like emphasizing the use of air gaps, anti-virus, and security patches are all indications of a failure to understand how the attack actually worked. By publishing this paper we hope to change this unsatisfactory situation and stimulate a broad discussion on proper mitigation strategies that don’t miss the mark.

Prologue: A Textbook Example of Cyber Warfare

Even three years after being discovered, Stuxnet continues to baffle military strategists, computer security experts, political decision makers, and the general public. The malware marks a clear turning point in the history of cyber security and in military history as well. Its impact for the future will most likely be substantial; therefore we should do our best to understand it properly. The actual outcome at Ground Zero is unclear, if only for the fact that no information is available on how many controllers were actually infected with Stuxnet. Theoretically, any problems at Natanz that showed in 2009 IAEA reports could have had a cause entirely unrelated to Stuxnet. Nevertheless forensic analysis can tell us what the attackers intended to achieve, and how.

But that cannot be accomplished by just understanding computer code and zero-day vulnerabilities. Because Stuxnet was a cyber-physical attack, one has to understand the physical part as well – the design features of the plant that was attacked, and the process parameters of this plant. Different from cyber attacks as we see them every day, a cyber-physical attack involves three layers and their specific vulnerabilities: The IT layer which is used to spread the malware, the control system layer which is used to manipulate (but not disrupt) process control, and finally the physical layer where the actual damage is created. In the case of the cyber attack against Natanz, the vulnerability on the physical layer was the fragility of the fast-spinning centrifuge rotors that was exploited by manipulations of process pressure and rotor speed. The Stuxnet malware makes for a textbook example of how interaction of these layers can be leveraged to create physical destruction by a cyber attack. Visible through the various cyber-physical exploits is the silhouette of a methodology for attack engineering that can be taught in school and can ultimately be implemented in algorithms.

While offensive forces will already have started to understand and work with this methodology, defensive forces have not – lulling themselves into the theory that Stuxnet was specifically crafted to hit just one singular target that is very different from common critical infrastructure installations. Such thinking displays deficient capability for abstraction. While the attack was highly specific, attack tactics and technology are not; they are generic and can be used against other targets as well. Assuming that these tactics would not be utilized by follow-up attackers is as naïve as assuming that history’s first DDoS attack, first botnet, or first self-modifying attack code would remain singular events, tied to their respective original use case. At this time, roughly 30 nations employ offensive cyber programs, including North Korea, Iran, Syria, and Tunisia. It should be taken for granted that every serious cyber warrior will copy techniques and tactics used in history’s first true cyber weapon. It should therefore be a priority for defenders to understand those techniques and tactics equally well, if not better.

Exploring the Attack Vector

Unrecognized by most who have written on Stuxnet, the malware contains two strikingly different attack routines. While literature on the subject has focused almost exclusively on the smaller and simpler attack routine that changes the speeds of centrifuge rotors, the “forgotten” routine is about an order of magnitude more complex and qualifies as a plain nightmare for those who understand industrial control system security. Viewing both attacks in context is a prerequisite for understanding the operation and the likely reasoning behind the scenes.

Both attacks aim at damaging centrifuge rotors, but use different tactics. The first (and more complex) attack attempts to over-pressurize centrifuges, the second attack tries to over-speed centrifuge rotors and to take them through their critical (resonance) speeds.

Overpressure Attack: Silent Hijack of the Crown Jewels

In 2007, an unidentified person submitted a sample of code to the collaborative anti-virus platform Virustotal that much later turned out to be the first variant of Stuxnet that we know of. Whilst not understood by any anti-virus company at the time, that code contained a payload for severely interfering with the Cascade Protection System (CPS) at the Natanz Fuel Enrichment Plant.

Iran’s low-tech approach to uranium enrichment

The backbone of Iran’s uranium enrichment effort is the IR-1 centrifuge which goes back to a European design of the late Sixties / early Seventies that was stolen by Pakistani nuclear trafficker A. Q. Khan. It is an obsolete design that Iran never managed to operate reliably. Reliability problems may well have started as early as 1987, when Iran began experimenting with a set of decommissioned P-1 centrifuges acquired from the Khan network. Problems with getting the centrifuge rotors to spin flawlessly will also likely have resulted in the poor efficiency that can be observed when analyzing IAEA reports, suggesting that the IR-1 performs only half as well – best case – as it could theoretically. A likely reason for such poor performance is that Iran reduced the operating pressure of the centrifuges in order to lower rotor wall pressure. But less pressure means less throughput – and thus less efficiency.

As unreliable and inefficient as the IR-1 is, it offered a significant benefit: Iran managed to produce the antiquated design at industrial scale. It must have seemed compelling to compensate for poor reliability and efficiency with volume, accepting a constant breakup of centrifuges during operation because they could be manufactured faster than they crashed. Supply was not a problem. But how does one use thousands of fragile centrifuges in a sensitive industrial process that doesn’t tolerate even minor equipment hiccups? In order to achieve that, Iran uses a Cascade Protection System which is quite unique as it is designed to cope with ongoing centrifuge trouble by implementing a crude version of fault tolerance. The protection system is a critical system component for Iran’s nuclear program as without it, Iran would not be capable of sustained uranium enrichment.

The inherent problem in the Cascade Protection System and its workaround

The Cascade Protection System consists of two layers, the lower layer being at the centrifuge level. Three fast-acting shut-off valves are installed for every centrifuge at the connectors of centrifuge piping and enrichment stage piping. By closing the valves, centrifuges that run into trouble – indicated by vibration – can be isolated from the stage piping. Isolated centrifuges are then run down and can be replaced by maintenance engineers while the process keeps running.

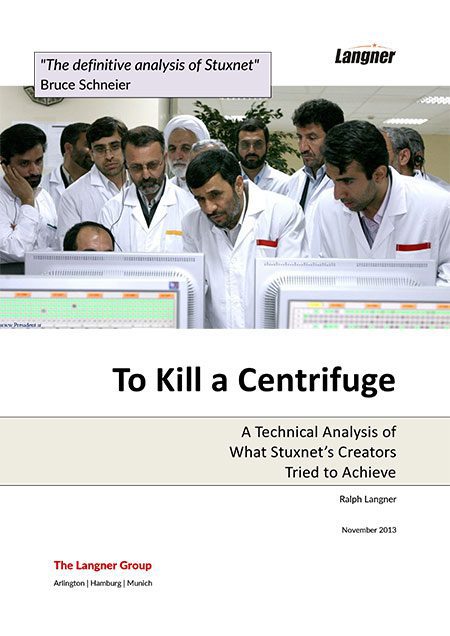

The central monitoring screen of the Cascade Protection System, which is discussed in detail in the last section of this paper, shows the status of each centrifuge within a cascade – running or isolated – as either a green or a grey dot. Grey dots are nothing special on the control system displays at Natanz and even appear in the official press photos shot during former president Ahmadinejad’s visit to Natanz in 2008. It must have appeared normal to see grey dots, as Iran had been used to rotor trouble since day one. While no Western plant manager would have cleared such photographic material for publication, Iran didn’t seem to bother to hide that fact from the media. To the contrary, there might have been a sense of pride involved in showing a technological achievement that allowed for tolerating centrifuge failure.

But the isolation valves can turn into as much of a problem as a solution. When operating basically unreliable centrifuges, one will see shut-offs frequently, and maintenance may not have a chance to replace damaged centrifuges before the next one in the same enrichment stage gets isolated. Once multiple centrifuges are shut off within the same stage, UF6 gas pressure – the most sensitive parameter in uranium enrichment using centrifuges – will increase, which can and will lead to all kinds of problems.

Iran found a creative solution for this problem – basically another workaround on top of the first workaround. For every enrichment stage, an exhaust valve is installed that allows for compensation of overpressure. By opening the valve, overpressure is relieved into the dump system. A dump system is present in any gas centrifuge cascade used for uranium enrichment but never used in production mode; it simply acts as a backup in case of cascade trips when the centrifuges must be evacuated and the “normal” procedure to simply use the tails take-off is unavailable for whatever reason. Iran discovered they can use (or abuse) the dump system to compensate stage overpressure. For every enrichment stage, pressure (controlling variable) is monitored by a pressure sensor. If that pressure exceeds a certain setpoint, the stage exhaust valve (controlled variable) is opened, and overpressure is released into the dump system until normal operating pressure is re-established – basic downstream control as known from other applications of vacuum technology.
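The control rule described above can be sketched as a simple threshold loop. The following Python fragment is only an illustration under stated assumptions, not the Natanz implementation: the class name `StageExhaustController`, the helper `simulate`, the pressure units, and the setpoint value are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class StageExhaustController:
    """Toy downstream pressure control for one enrichment stage (hypothetical)."""
    setpoint: float           # pressure threshold in arbitrary units (assumed)
    valve_open: bool = False  # current state of the stage exhaust valve

    def step(self, measured_pressure: float) -> bool:
        # Open the exhaust valve while pressure exceeds the setpoint and
        # close it again once pressure is back at or below the setpoint.
        self.valve_open = measured_pressure > self.setpoint
        return self.valve_open


def simulate(pressures, setpoint):
    """Return the valve state (True = open) for each pressure sample."""
    controller = StageExhaustController(setpoint=setpoint)
    return [controller.step(p) for p in pressures]


if __name__ == "__main__":
    # Pressure rises above the assumed setpoint of 1.3 and falls back again.
    print(simulate([1.0, 1.2, 1.6, 1.4, 0.9], setpoint=1.3))
```

A real pressure controller would probably add hysteresis and signal filtering so that the valve does not chatter around the setpoint; both are omitted here for brevity.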

The downstream control architecture with an exhaust valve per stage was most likely not acquired from the Khan network as Pakistan may not have needed it; apparently they never experienced a similar amount of unreliability. The control system technology used at Natanz did not exist back in the Eighties when Pakistan had its biggest success in uranium enrichment. The specification for the PROFIBUS fieldbus, a realtime micro-network for attaching field devices to controllers, was first published in 1993, and the controllers used for the CPS (Siemens S7-417) were introduced to the market not earlier than 1999. However, there is no evidence of a close relation between Iran and the Khan network after 1994. Lead time for the adoption of new technology such as PROFIBUS in the automation space with its extremely long lifecycles is around ten years as asset owners are reluctant to invest in new technology until it is regarded “proven” industry standard, making it unlikely that anybody would have used the new fieldbus technology for production use in critical facilities before the early years of the new millennium, just when the Khan network was shut down. But in 1998 Pakistan had already successfully tested their first nuclear weapon, obviously without the help of the new fieldbus and control technology from the German industry giant.

What we do know is that when Iran got serious about equipping the Natanz site in the early years of the new millennium, they ran into technical trouble. In October 2003, the EU3 (Britain, Germany, and France) requested that Iran suspend their enrichment activities “for a period of time” as a confidence-building measure. Iranian chief negotiator Hassan Rowhani, now president of Iran, told the EU3 that Iran agreed to a suspension “for as long as we deem necessary”. Two years later, Rowhani clarified that the suspension had only been accepted in areas where Iran did not experience technical problems. In 2006, Iran no longer deemed the hiatus “necessary”, for the simple reason that they had overcome their technical trouble. This became evident when the IAEA seals at the cascades were broken and production resumed. It can be speculated that the fine-tuned pressure control that the stage exhaust valves provide was designed between 2003 and 2006.

The SCADA software (supervisory control and data acquisition, basically an IT application for process monitoring by operators) for the CPS also appears to be a genuine development for the Natanz Fuel Enrichment Plant. To put it quite frankly, its appearance is quite amateurish and doesn’t indicate signs of the many man-years of Pakistani experience. Anything “standard” that would indicate software maturity and an experienced software development team is missing. It appears like work in progress of software developers with little background in SCADA. With Iran understanding the importance of the control system for the protection system, a reasonable strategy would have been to keep development and product support in trusted domestic hands.

Messing up Iran’s technology marvel

The cyber attack against the Cascade Protection System infects Siemens S7-417 controllers with a matching configuration. The S7-417 is a top-of-the-line industrial controller for big automation tasks. In Natanz, it is used to control the valves and pressure sensors of up to six cascades (or 984 centrifuges) that share common feed, product, and tails stations.

Immediately after infection the payload of this early Stuxnet variant takes over control completely. Legitimate control logic is executed only as long as malicious code permits it to do so; it gets completely de-coupled from electrical input and output signals. The attack code makes sure that when the attack is not activated, legitimate code has access to the signals; in fact it is replicating a function of the controller’s operating system that would normally do this automatically but was disabled during infection. In what is known as a man-in-the-middle scenario in cyber security, the input and output signals are passed from the electrical peripherals to the legitimate program logic and vice versa by attack code that has positioned itself “in the middle”.

Things change after activation of the attack sequence, which is triggered by a combination of highly specific process conditions that are constantly monitored by the malicious code. Then, the much-publicized manipulation of process values inside the controller occurs. Process input signals (sensor values) are recorded for a period of 21 seconds. Those 21 seconds are then replayed in a constant loop during the execution of the attack, and will ultimately show on SCADA screens in the control room, suggesting normal operation to human operators and any software-implemented alarm routines. During the attack sequence, legitimate code continues to execute but receives fake input values, and any output (actuator) manipulations of legitimate control logic no longer have any effect.
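From a defender’s point of view, a recorded window that is replayed in a constant loop leaves one trace: the signal becomes exactly periodic, which a noisy analog measurement normally is not. The Python sketch below illustrates that idea only; it is not taken from the paper, and the function name `looks_replayed`, the sampling rate, and the exact-equality comparison are assumptions made for the example.

```python
def looks_replayed(samples, window_len, min_repeats=3):
    """Return True if the last `min_repeats` windows are identical copies.

    `samples` is a list of sensor readings, `window_len` the suspected loop
    length in samples (e.g. 21 for a 21-second loop at an assumed 1 Hz rate).
    """
    needed = window_len * min_repeats
    if window_len <= 0 or len(samples) < needed:
        return False  # not enough history to decide
    tail = samples[-needed:]
    first = tail[:window_len]
    # Compare every later window against the first one, sample by sample.
    return all(
        tail[i * window_len:(i + 1) * window_len] == first
        for i in range(1, min_repeats)
    )


if __name__ == "__main__":
    window = [1.01, 0.99, 1.02]
    print(looks_replayed(window * 3, window_len=3))  # looped recording
    live = [1.01, 0.99, 1.02, 1.00, 0.98, 1.03, 1.02, 1.01, 0.97]
    print(looks_replayed(live, window_len=3))        # ordinary noisy signal
```

Note that a genuinely constant signal would also be flagged, so a check of this kind is only meaningful for values that carry measurement noise, and only if it runs somewhere the manipulated controller cannot influence.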

When the actual malicious process manipulations begin, all isolation valves for the first two and the last two enrichment stages are closed, thereby blocking the product and tails outflow of process gas of each affected cascade. From the remaining centrifuges, more centrifuges are isolated, except in the feed stage. The consequence is that operating pressure in the non-isolated centrifuges increases as UF6 continues to flow into the centrifuge via the feed, but cannot escape via the product and tails take-offs, causing pressure to rise continuously.

At the same time, stage exhaust valves stay closed so that overpressure cannot be released to the dump line. But that is easier said than done because of the closed-loop implementation of the valve control. The valves’ input signals are not attached directly to the main Siemens S7-417 controllers but to dedicated pressure controllers that are present once per enrichment stage. The pressure controllers have a configurable setpoint (threshold) that prompts for action when exceeded, namely to signal the stage exhaust valve to open until the measured process pressure falls below that threshold again. The pressure controllers must have a data link to the Siemens S7-417 which enables the latter to manipulate the valves. With some uncertainty left we assume that the manipulation didn’t use direct valve close commands but a de-calibration of the pressure sensors.

Pressure sensors are not perfect at translating pressure into an analog output signal, but their errors can be corrected by calibration. The pressure controller can be told what the “real” pressure is for given analog signals and then automatically linearize the measurement to what would be the “real” pressure. If the linearization is overwritten by malicious code on the S7-417 controller, analog pressure readings will be “corrected” during the attack by the pressure controller, which then interprets all analog pressure readings as perfectly normal pressure no matter how high or low their analog values are. The pressure controller then acts accordingly by never opening the stage exhaust valves. In the meantime, actual pressure keeps rising. The sensors for feed header, product take-off and tails take-off needed to be compromised as well because with the flow of process gas blocked, they would have shown critical high (feed) and low (product and tails) pressure readings, automatically closing the master feed valves and triggering an alarm. Fortunately for the attackers, the same tactic could be used for the exhaust valves and the additional pressure transducers, numbered from 16 to 21 in the facility and in the attack code, as they use the same products and logic. The detailed pin-point manipulations of these sub-controllers indicate a deep physical and functional knowledge of the target environment; whoever provided the required intelligence may as well know the favorite pizza toppings of the local head of engineering.
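To make the calibration concept concrete, the following Python sketch shows piecewise-linear linearization of a raw analog signal and what happens when every calibration point maps to the same pressure. It is a toy model under stated assumptions: the function name `linearize`, the 4–20 mA signal range, and all table values are hypothetical and are not taken from the pressure controllers used at Natanz.

```python
from bisect import bisect_right


def linearize(raw, table):
    """Map a raw analog value to a pressure by piecewise-linear interpolation.

    `table` is a list of (raw_signal, pressure) calibration points, sorted by
    raw_signal. Values outside the table are clamped to the end points.
    """
    raws = [r for r, _ in table]
    if raw <= raws[0]:
        return table[0][1]
    if raw >= raws[-1]:
        return table[-1][1]
    i = bisect_right(raws, raw)            # first point strictly above `raw`
    (r0, p0), (r1, p1) = table[i - 1], table[i]
    return p0 + (p1 - p0) * (raw - r0) / (r1 - r0)


if __name__ == "__main__":
    # Assumed calibration: 4-20 mA maps to 0-100 pressure units.
    honest = [(4.0, 0.0), (12.0, 50.0), (20.0, 100.0)]
    # A flattened table reports the same pressure for every raw signal.
    flattened = [(4.0, 50.0), (12.0, 50.0), (20.0, 50.0)]
    print(linearize(16.0, honest))     # 75.0
    print(linearize(19.0, flattened))  # 50.0
```

With the flattened table the output no longer depends on the input at all, which matches the behavior described above: every reading is interpreted as normal pressure, so the threshold for opening the exhaust valve is never reached.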

The attack continues until the attackers decide that enough is enough, based on monitoring centrifuge status, most likely vibration sensors, which suggests a mission abort before the matter hits the fan. If the idea was catastrophic destruction, one would simply have to sit and wait. But causing a solidification of process gas would have resulted in simultaneous destruction of hundreds of centrifuges per infected controller. While at first glance this may sound like a goal worth achieving, it would also have blown cover since its cause would have been detected fairly easily by Iranian engineers in post mortem analysis. The implementation of the attack with its extremely close monitoring of pressures and centrifuge status suggests that the attackers instead took great care to avoid catastrophic damage. The intent of the overpressure attack was more likely to increase rotor stress, thereby causing rotors to break early – but not necessarily during the attack run.

Nevertheless, the attackers faced the risk that the attack might not work at all because it is so over-engineered that even the slightest oversight – or any configuration change – would have resulted in zero impact or, worst case, in a program crash that would have been detected by Iranian engineers quickly. It is obvious and documented later in this paper that over time Iran did change several important configuration details such as the number of centrifuges and enrichment stages per cascade, all of which would have rendered the overpressure attack useless; a fact that the attackers must have anticipated.

Rotor Speed Attack: Pushing the Envelope

Whatever the effect of the overpressure attack was, the attackers decided to try something different in 2009. That may have been motivated by the fact that the overpressure attack was lethal just by accident, that it didn’t achieve anything, or that somebody simply decided to check out something new and fresh.

The new variant that was not discovered until 2010 was much simpler and much less stealthy than its predecessor. It also attacked a completely different component: the Centrifuge Drive System (CDS) that controls rotor speeds. The attack routines for the overpressure attack were still contained in the payload, but no longer executed – a fact that must be viewed as deficient OPSEC. It provided us by far the best forensic evidence for identifying Stuxnet’s target, and without the new, easy-to-spot variant the earlier predecessor may never have been discovered. That also means that the most aggressive cyber-physical attack tactics would still be unknown to the public – unavailable for use in copycat attacks, and unusable as a deterrent display of cyber power.

Bringing in the infosec cavalry

Stuxnet’s early version had to be physically installed on a victim machine, most likely a portable engineering system, or it could have been passed on a USB stick carrying an infected configuration file for Siemens controllers. Once the configuration file was opened by the vendor’s engineering software, the respective computer was infected. But no engineering software to open the malicious file means no propagation.

That must have seemed to be insufficient or impractical for the new version, as it introduced a method of self-replication that allowed it to spread within trusted networks and via USB sticks even on computers that did not host the engineering software application. The extended dropper suggests that the attackers had lost the capability to transport the malware to its destination by directly infecting the systems of authorized personnel, or that the Centrifuge Drive System was installed and configured by other parties to which direct access was not possible. The self-replication would ultimately even make it possible to infiltrate and identify potential clandestine nuclear sites that the attackers didn’t know about.

All of a sudden, Stuxnet became equipped with the latest and greatest MS Windows exploits and stolen digital certificates as the icing on the cake, allowing the malicious software to pose as legitimate driver software and thus not be rejected by newer versions of the Windows operating system. Obviously, organizations that have a stash of zero-days to choose from and can produce stolen certificates just like that had joined the club. Whereas the development of the overpressure attack can be viewed as a process that could be limited to an in-group of top notch industrial control system security experts and coders who live in an exotic ecosystem quite remote from IT security, the circle seems to have gotten much wider, with a new center of gravity in Maryland. It may have involved a situation where the original crew was taken out of command by a casual “we’ll take it from here” by people with higher pay grades. Stuxnet had arrived in big infosec.

But the use of multiple zero-days came with a price. The new Stuxnet variant was much easier to identify as malicious software than its predecessor as it suddenly displayed very strange and very sophisticated behavior at the IT layer. In comparison, the dropper of the initial version looked pretty much like a legitimate or, worst case, pirated Step7 software project for Siemens controllers; the only strange thing was that a copyright notice and license terms were missing. Back in 2007, one would have had to use extreme forensic efforts to realize what Stuxnet was all about – and one would have had to specifically look for it, which was beyond everybody’s imagination at the time. The newer version, equipped with a wealth of exploits that hackers can only dream about, signaled to even the least vigilant anti-virus researcher that this was something big, warranting a closer look. That happened in 2010 when a previously little-known Belarusian anti-virus company called VirusBlokAda practically stumbled over the malware and put it on the desk of the AV industry.

A new shot at cracking rotors

Centrifuge rotors – the major fragility in a gas centrifuge – have more than one way to run into trouble. In the later Stuxnet variant, the attackers explored a different path to tear them apart: Rotor velocity. Any attempt to overpressure centrifuges is dormant in the new version, and if on some cascades the earlier attack sequence would still execute when the rotor speed attack sequence starts, no coordination is implemented. The new attack is completely independent from the older one, and it manipulates a completely different control system component: The Centrifuge Drive System.

That system is not controlled by the same S7-417 controllers, but by the much smaller S7-315. One S7-315 controller is dedicated to the 164 drives of one cascade (one drive per centrifuge). The cascade design using 164 centrifuges assembled in four lines and 43 columns had been provided by A. Q. Khan and resembles the Pakistani cascade layout. Every single centrifuge comes with its own motor at the bottom of the centrifuge, a highly stable drive that can run at speeds up to 100,000 rpm with constant torque during acceleration and deceleration. Such variable-frequency drives cannot be accessed directly by a controller but require the use of frequency converters: basically programmable power supplies that allow for the setting of specific speeds by providing the motor an AC current with a frequency as requested by the controller using digital commands. Frequency converters are attached to a total of six PROFIBUS segments because of technical limitations of the fieldbus equipment (one PROFIBUS segment couldn’t serve all frequency converters), all of which end at communication processors (CPs) that are attached to the S7-315 CPU’s backplane. So while the attack code running on the S7-315 controller also talks to groups of six target sets (rotor control groups), there is no linkage whatsoever to the six target sets (cascades) of the overpressure attack that executes on the S7-417.

The attack code suggests that the S7-315 controllers are connected to a Siemens WinCC SCADA system for monitoring drive parameters. Most likely, an individual WinCC instance services a total of six cascades. However, on the video and photographic footage of the control rooms at Natanz no WinCC screen could be identified. This doesn’t necessarily mean that the product is not used; installations might be placed elsewhere, for example on operator panels inside the cascade hall.

Keep it simple, stupid

Just like in the predecessor, the new attack operates periodically, about once per month, but the trigger condition is much simpler. While in the overpressure attack various process parameters are monitored to check for conditions that might occur only once in a blue moon, the new attack is much more straightforward.

The new attack works by changing rotor speeds. With rotor wall pressure being a function of process pressure and rotor speed, the easy road to trouble is to over-speed the rotors, thereby increasing rotor wall pressure. Which is what Stuxnet did. Normal operating speed of the IR-1 centrifuge is 63,000 rpm, as disclosed by A. Q. Khan himself in his 2004 confession. Stuxnet increases that speed by a good one-third to 84,600 rpm for fifteen minutes, including the acceleration phase which will likely take several minutes. It is not clear if that is hard enough on the rotors to crash them in the first run, but it seems unlikely – even if just because a month later, a different attack tactic is executed, indicating that the first sequence may have left a lot of centrifuges alive, or at least more alive than dead. The next consecutive run brings all centrifuges in the cascade basically to a stop (120 rpm), only to speed them up again, taking a total of fifty minutes. A sudden stop like “hitting the brake” would predictably result in catastrophic damage, but it is unlikely that the frequency converters would permit such a radical maneuver. It is more likely that when told to slow down, the frequency converter smoothly decelerates just like in an isolation / run-down event, only to resume normal speed thereafter. The effect of this procedure is not deterministic but offers a good chance of creating damage. The IR-1 is a supercritical design, meaning that operating speed is above certain critical speeds which cause the rotor to vibrate (if only briefly). Every time a rotor passes through these critical speeds, also called harmonics, it can break.
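The speed figures quoted above are easy to check with a few lines of arithmetic. The Python sketch below computes the relative over-speed and converts rotor speeds to drive frequencies. The three rpm values come from the text; the conversion assumes a motor with one pole pair and no slip, which is an assumption made for this example and is not stated in the paper, and the function names are hypothetical.

```python
NORMAL_RPM = 63_000     # normal IR-1 operating speed, from the text
OVERSPEED_RPM = 84_600  # over-speed target, from the text
NEAR_STOP_RPM = 120     # near-stop target, from the text


def relative_increase(base, target):
    """Fractional change from `base` to `target`."""
    return (target - base) / base


def rpm_to_hz(rpm, pole_pairs=1):
    """Drive frequency for a given speed (assumes no slip, `pole_pairs` assumed)."""
    return rpm * pole_pairs / 60


if __name__ == "__main__":
    print(f"over-speed: {relative_increase(NORMAL_RPM, OVERSPEED_RPM):.1%}")
    for rpm in (NORMAL_RPM, OVERSPEED_RPM, NEAR_STOP_RPM):
        print(f"{rpm:>6} rpm -> {rpm_to_hz(rpm):.0f} Hz")
```

Under these assumptions the over-speed works out to roughly 34 percent, consistent with “a good one-third”, and the three speeds correspond to 1,050 Hz, 1,410 Hz, and 2 Hz.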

If rotors do crack during one of the attack sequences, the Cascade Protection System would kick in, isolate and run down the respective centrifuge. If multiple rotors crashed (very likely), the resulting overpressure in the stage would be compensated by the exhaust valves. Once this was no longer possible, for example because all centrifuges in a single stage had been isolated, a contingency dump would occur, leaving Iranian operators with the question of why all of a sudden so many centrifuges break at once. Not that they didn’t have enough new ones in stock for replacement, but unexplained problems like this are among any control system engineer’s most frustrating experiences, usually referred to as chasing a demon in the machine.

Certainly another piece of evidence that catastrophic destruction was not intended is the fact that no attempts had been made to disable the Cascade Protection System during the rotor speed attack, which would have been much easier than the delicate and elaborate overpressure attack. Essentially it would only have required a very small piece of attack code from the overpressure attack that was implemented already.

OPSEC becomes less of a concern

The most common technical misconception about Stuxnet, which appears in almost every publication on the malware, is that the rotor speed attack records and plays back process values, using the recording and playback of signal inputs that we uncovered back in 2010 and that is also highlighted in my TED talk. Escaping the attention of most people writing about Stuxnet, this particular and certainly most intriguing attack component is only used in the overpressure attack. The S7-315 attack against the Centrifuge Drive System simply doesn’t do this, and as implemented in the CPS attack it wouldn’t even work on the smaller controller for technical reasons. The rotor speed attack is much simpler. During the attack, legitimate control code is simply suspended. The attack sequence is executed; thereafter a conditional BLOCK END directive is called, which tells the runtime environment to jump back to the top of the main executive that is constantly looped on the single-tasking controller, thereby re-iterating the attack and suspending all subsequent code.
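The control flow described above can be modeled in a few lines of Python. This is a simplified sketch, not a reconstruction of the actual attack code: the class and method names are invented for illustration, and the early `return` merely stands in for the conditional block end on a real controller.

```python
class SingleTaskController:
    """Toy model of a controller that loops one main executive forever."""

    def __init__(self):
        self.attack_active = False
        self.legit_runs = 0   # how often the legitimate logic executed
        self.attack_runs = 0  # how often the injected logic executed

    def injected_code(self):
        """Hypothetical injected block placed at the top of the executive.

        Returns True when the rest of the cycle should be skipped.
        """
        if not self.attack_active:
            return False
        self.attack_runs += 1
        return True

    def legitimate_code(self):
        """Stand-in for the plant's original control logic."""
        self.legit_runs += 1

    def scan_cycle(self):
        """One pass through the main executive."""
        if self.injected_code():
            # Conditional block end: the cycle finishes here, and the
            # runtime starts the next cycle from the top again.
            return
        self.legitimate_code()


def run(controller, cycles):
    """Execute the given number of scan cycles."""
    for _ in range(cycles):
        controller.scan_cycle()


plc = SingleTaskController()
run(plc, 3)                 # normal operation
plc.attack_active = True
run(plc, 5)                 # attack sequence: legitimate code never runs
plc.attack_active = False
run(plc, 2)                 # normal operation resumes
print(plc.legit_runs, plc.attack_runs)
```

The point of the model is that nothing is replaced or faked. The legitimate code stays in place and is simply never reached for as long as the injected block ends the cycle early.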

The attackers did not care to have the legitimate code continue execution with fake input data, most likely because it wasn’t needed. Centrifuge rotor speed is constant during normal operation; if shown on a display, one would expect to see static values all the time. It is also a less dramatic variable to watch than operating pressure because rotor speed is not a controlled variable; there is no need to fine-tune speeds manually, and there is no risk that for whatever reason (short of a cyber attack) speeds would change the way stage process pressure does. Rotor speed is simply set and then held constant by the frequency converter.

If a SCADA application did monitor rotor speeds by communicating with the infected S7-315 controllers, it would simply have seen the exact speed values from the time before the attack sequence executes. The SCADA software gets its information from memory in the controller, not by directly talking to the frequency converter. Such memory must be updated actively by the control logic, reading values from the converter. However, if legitimate control logic is suspended, such updates no longer take place, resulting in static values that perfectly match normal operation.
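Why the display stays static can be shown with a small Python model of the data path. All names here are illustrative assumptions; the only facts taken from the text are that SCADA reads controller memory, that the control logic is what refreshes that memory from the converter, and the speed figures themselves.

```python
class InfectedPlc:
    """Toy model: SCADA reads memory, and only the control logic updates it."""

    def __init__(self, converter_rpm):
        self.converter_rpm = converter_rpm  # what the drive is actually doing
        self.memory_rpm = converter_rpm     # the value SCADA is able to read
        self.logic_suspended = False

    def scan(self):
        """Legitimate logic copies the converter value into memory."""
        if not self.logic_suspended:
            self.memory_rpm = self.converter_rpm


def scada_read(plc):
    """SCADA never talks to the converter, only to controller memory."""
    return plc.memory_rpm


def replay(plc, steps):
    """Apply (actual_rpm, logic_suspended) steps; return (actual, displayed) pairs."""
    observed = []
    for actual_rpm, suspended in steps:
        plc.converter_rpm = actual_rpm
        plc.logic_suspended = suspended
        plc.scan()
        observed.append((actual_rpm, scada_read(plc)))
    return observed


steps = [
    (63_000, False),  # normal operation
    (84_600, True),   # overspeed while legitimate logic is suspended
    (120, True),      # near stop while legitimate logic is suspended
    (63_000, False),  # back to normal, logic resumes
]
for actual, displayed in replay(InfectedPlc(63_000), steps):
    print(actual, displayed)
```

In this model the displayed value stays at 63,000 rpm throughout, even though no value was ever forged. The stale memory does the work that record-and-playback does in the overpressure attack.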

Nevertheless, the implementation of the attack is quite crude; blocking control code from execution for up to an hour is something that experienced control system engineers would sooner or later detect, for example by using the engineering software’s diagnostic features, or by inserting code for debugging purposes. Certainly they would have needed a clue that something was at odds with rotor speed. It is unclear if post mortem analysis provided enough hints; the fact that both overspeed and transition through critical speeds were used certainly helped to obscure the cause. However, at some point in time the attack should have been recognizable by plant floor staff just by the old ear drum. Bringing 164 centrifuges or multiples thereof from 63,000 rpm to 120 rpm and getting them up to speed again would have been noticeable, provided experienced staff had been cautious enough to remove protective headsets in the cascade hall.

Another indication that OPSEC became flawed can be seen in the SCADA area. As mentioned above, it is unclear if the WinCC product is actually used to monitor the Centrifuge Drive System at Natanz. If it is, it would have been used by Stuxnet to synchronize the attack sequence between up to six cascades so that their drives would simultaneously be affected, making audible detection even easier. And if at some point in time somebody at Natanz had started to thoroughly analyze the SCADA/PLC interaction, they would have realized within hours that something was fishy, like we did back in 2010 in our lab. A Stuxnet-infected WinCC system probes controllers every five seconds for data outside the legitimate control blocks; data that was injected by Stuxnet. In a proper forensic lab setup this produces traffic that simply cannot be missed. Did Iran realize that? Maybe not, as a then-staff member of Iran CERT told me that at least the computer emergency response team did not conduct any testing on their own back in 2010 but was curiously following our revelations.
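The kind of check that would surface such probing in a lab capture can be sketched as follows. The log format (timestamp in seconds plus a data block number), the block numbers, and the thresholds are all assumptions made for this example; the only detail taken from the text is that the probes target data outside the legitimate blocks at a regular interval.

```python
from collections import defaultdict
from statistics import mean, pstdev


def find_periodic_probes(reads, legit_blocks, min_reads=4, max_jitter_s=0.5):
    """Flag data blocks outside the known-good set that are read at a steady rate.

    reads: iterable of (timestamp_seconds, block_id) tuples (hypothetical format).
    Returns a dict mapping each suspicious block_id to its mean read interval.
    """
    timestamps = defaultdict(list)
    for ts, block_id in reads:
        if block_id not in legit_blocks:
            timestamps[block_id].append(ts)

    suspicious = {}
    for block_id, stamps in timestamps.items():
        if len(stamps) < min_reads:
            continue  # too few reads to call it a pattern
        stamps.sort()
        gaps = [later - earlier for earlier, later in zip(stamps, stamps[1:])]
        if pstdev(gaps) <= max_jitter_s:
            suspicious[block_id] = mean(gaps)
    return suspicious


LEGIT_BLOCKS = {1, 2, 3}  # blocks belonging to the plant's own project (made up)
capture = [
    (0.0, 1), (0.7, 2), (1.9, 1), (4.2, 3), (8.8, 2),            # ordinary traffic
    (0.0, 999), (5.0, 999), (10.0, 999), (15.0, 999), (20.0, 999),  # steady probe
    (3.3, 50),                                                    # one-off read
]
print(find_periodic_probes(capture, LEGIT_BLOCKS))
```

The check depends on knowing which blocks are legitimate, which is exactly the knowledge plant engineers have and an outside analyst lacks.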

Summing up, the differences between the two Stuxnet variants discussed here are striking. In the newer version, the attackers became less concerned about being detected. It seems a stretch to say that they wanted to be discovered, but they were certainly pushing the envelope and accepting the risk.

Analysis: The Dynamics of a Cyber Warfare Campaign

Everything has its roots, and the roots of Stuxnet are not in the IT domain but in nuclear counter-proliferation. Sabotaging the Iranian nuclear program had been done before by supplying Iran with manipulated mechanical and electrical equipment. Stuxnet transformed that approach from analog to digital. Not drawing from the same brain pool that threw sand in Iran’s nuclear gear in the past would have been a stupid waste of resources, as even the digital attacks required in-depth knowledge of the plant design and operation; knowledge that could not be obtained by simply analyzing network traffic and computer configurations at Natanz. It is not even difficult to identify potential suspects for such an operation; nuclear counter-proliferation is the responsibility of the US Department of Energy and since 1994 also of the Central Intelligence Agency, even though neither organization lists sabotage among its official duties.

A low-yield weapon by purpose

Much has been written about the failure of Stuxnet to destroy a substantial number of centrifuges, or to significantly reduce Iran’s LEU production. While that is indisputable, it doesn’t appear that this was the attackers’ intention. If catastrophic damage was caused by Stuxnet, that would have been by accident rather than on purpose. The attackers were in a position where they could have broken the victim’s neck, but they chose continuous periodical choking instead. Stuxnet is a low-yield weapon with the overall intention to reduce the lifetime of Iran’s centrifuges and make their fancy control systems appear beyond their understanding.

Reasons for such tactics are not difficult to identify. When Stuxnet was first deployed, Iran had already mastered the production of IR-1 centrifuges at industrial scale. It can be projected that simultaneous catastrophic destruction of all operating centrifuges would not have set back the Iranian nuclear program for longer than the two-year setback that I have estimated for Stuxnet. During the summer of 2010 when the Stuxnet attack was in full swing, Iran operated about four thousand centrifuges, but kept another five thousand in stock, ready to be commissioned. Apparently, Iran is not in a rush to build up a sufficient stockpile of LEU that can then be turned into weapon-grade HEU but favors a long-term strategy. A one-time destruction of their operational equipment would not have jeopardized that strategy, just like the catastrophic destruction of 4,000 centrifuges by an earthquake back in 1981 did not stop Pakistan on its way to get the bomb.

While resulting in approximately the same amount of setback for Iran as a brute-force tactic, the low-yield approach offered added value. It drove Iranian engineers crazy in the process, up to the point where they may ultimately end in total frustration about their capabilities to get a stolen plant design from the Seventies running, and to get value from their overkill digital protection system. When comparing the Pakistani and the Iranian uranium enrichment programs, one cannot fail to notice a major performance difference. Pakistan basically managed to go from zero to successful LEU production within just two years in times of a shaky economy, without the latest in digital control technology. The same effort took Iran over ten years, despite the jump-start by the Khan network and abundant money from sales of crude oil. If Iran’s engineers didn’t look incompetent before, they certainly did during Operation Olympic Games (Stuxnet’s alleged operational code name).

The world is bigger than Natanz

The fact that the two major versions of Stuxnet analyzed in this paper differ so dramatically suggests that during the operation, something big was going on behind the scenes. Operation Olympic Games obviously involved much more than developing and deploying a piece of malware, however sophisticated that malware may be. It was a campaign rather than an attack, and it appears that the priorities of that campaign shifted significantly during its execution.

When we analyzed both attacks in 2010, we first assumed that they were executed simultaneously, maybe with the idea to disable the Cascade Protection System during the rotor speed attack. That turned out wrong; no coordination between the two attacks can be found in code. Then, we assumed that the attack against the Centrifuge Drive System was the simple and basic predecessor after which the big one was launched, the attack against the Cascade Protection System. The Cascade Protection System attack is a display of absolute cyber power. It appeared logical to assume a development from simple to complex. Several years later, it turned out that the opposite is the case. Why would the attackers go back to basics?

The dramatic differences between the two versions point to changing priorities that will most likely have been accompanied by a change in stakeholders. Technical analysis shows that the risk of discovery was no longer the attackers’ primary concern when they started to experiment with new ways to mess up operations at Natanz. The shift of attention may have been fueled by a simple insight: Nuclear proliferators come and go, but cyber warfare is here to stay. Operation Olympic Games started as an experiment with unpredictable outcome. Along the road, one result became clear: Digital weapons work. And unlike their analog counterparts, they don’t put forces in harm’s way, produce less collateral damage, can be deployed stealthily, and are dirt cheap. The contents of Pandora’s Box had implications much beyond Iran; they made analog warfare look low-tech, brutal, and so Twentieth-Century.

Somebody among the attackers may also have recognized that blowing cover would come with benefits. Uncovering Stuxnet was the end to the operation, but not necessarily the end of its utility. It would show the world what cyber weapons can do in the hands of a superpower. Unlike military hardware, one cannot display USB sticks at a military parade. The attackers may also have become concerned that another nation, worst case an adversary, would be first in demonstrating proficiency in the digital domain, a scenario nothing short of another Sputnik moment in American history. All good reasons for not having to fear detection too much.

Whether that twist of affairs was intentional is unknown. As with so many human endeavors, it may simply have been an unintended side effect that turned out critical. It changed global military strategy in the 21st century.

Aftermath

Whatever the hard-fact results of Stuxnet were at Ground Zero, apparently they were not viewed as a disappointing failure by its creators. Otherwise it would be difficult to explain the fact that New York Times reporter David Sanger was able to find maybe five to ten high-ranking government officials who were eager to boast about the top secret operation and highlight its cleverness. It looked just a little bit too much like eagerly taking credit for it, contradicting the idea of a mission gone badly wrong.

Positive impact was seen elsewhere. Long before the coming-out but after Operation Olympic Games was launched, the US government started investing big time in offensive cyber warfare and the formation of US Cyber Command. The fact is that the consequences of Stuxnet can be seen less in Iran’s uranium enrichment efforts than in military strategy. Stuxnet will not be remembered as a significant blow against the Iranian nuclear program. It will be remembered as the opening act of cyber warfare, especially when viewed in the context of the Duqu and Flame malware, which are outside the scope of this paper. Offensive cyber warfare activities have become a higher priority for the US government than dealing with Iran’s nuclear program, and maybe for a good reason. The most significant effects caused by Stuxnet cannot be seen in Natanz but in Washington DC, Arlington, and Fort Meade.

Only the future can tell how cyber weapons will impact international conflict, and maybe even crime and terrorism. That future is burdened by an irony: Stuxnet started as nuclear counter-proliferation and ended up opening the door to proliferation that is much more difficult to control: The proliferation of cyber weapon technology.