OT Security Blog Articles

Insights on Resilience, Vulnerability Management, and More

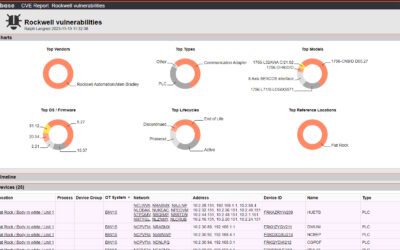

The big OT asset visibility misconception

You have heard it a dozen times: You cannot protect what you don’t see. You need asset visibility! Based on this trivial insight, OT security vendors usually explain how you can achieve such visibility with their products. Let’s check what that actually means in...

Threat-centric vs. infrastructure-centric OT security

There are two general approaches to OT security. One approach is threat-centric and attempts to identify and eliminate cyber threats in OT networks. The other approach is infrastructure-centric and largely doesn’t care about threats. Instead, it attempts to create a...

Compound OT security gains

Guess what: the biggest problem in OT security isn’t the ever-changing threat landscape. It’s the inefficiency of popular OT security strategies and processes. Why inefficient? Simply because they don’t pay any long-term dividends. Your OT threat detection efforts...

OT Asset Management in 2024: A product category in its own right

Back in 2017 when we launched the OTbase software product, we were wondering how to label it. The initial idea was to call it an “OT management system”. That seemed to resonate with nobody. We then switched to “OT asset management software”, which was a little more...

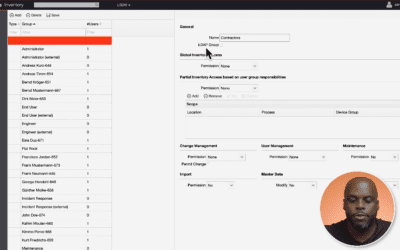

Transform OT User Access Control: A Deep Dive into OTbase Inventory’s Capabilities

With OTbase Inventory, organizations have a comprehensive tool for managing user access in a nuanced and effective manner. It’s not just about limiting access; it’s about optimizing it for operational efficiency.

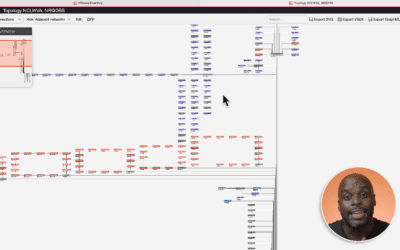

Mastering the Intricacies of OT Network Topology: A Guide to Simplifying Complexity

With tools like OTbase Inventory, the management of network topology in OT has reached a new level of sophistication. It’s not just about visualizing networks; it’s about gaining actionable insights for effective management.

Why tracking OT Asset Health is even more important than network anomaly detection

In the evolving landscape of Operational Technology (OT), asset management has transitioned from being an operational necessity to a strategic advantage.

The OT cyber risk they didn’t tell you about

If you have spent any time in operational technology over the last decade, you have almost certainly been told about cyber risk: the risk of falling victim to evil hackers who have no problem messing up your vulnerability-ridden OT infrastructure...

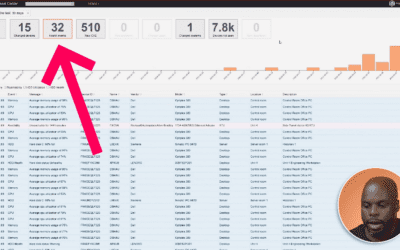

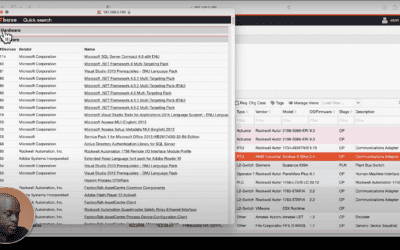

Struggling to Find Your OT Assets? Speed Up Your Search with OTbase Quick Search

In our previous blog post, we explored how OTbase Asset Management Software helps you match CVE data to your devices. Today, we're diving into another powerful feature of OTbase Asset Inventory: Quick Search. As demonstrated in this video tutorial, Quick Search is the...